01 — Overview

What was built

Traditional 3D printing interfaces suffer from a critical gap: operators have limited real-time visibility into the print process and no meaningful way to intervene remotely. I led the design and development of an AR system that addresses this directly, using the Microsoft HoloLens 2 to create an immersive digital twin of the printer, visible and controllable in mixed reality.

The proof-of-concept system overlays live process data, including temperature, filament usage, and print progress, directly onto the physical printer and lets operators start, pause, or stop the print through AR interface controls.

02 — Research

Understanding the problem space

Prior to development, I conducted a literature review, semi-structured interviews with practitioners, and a competitive analysis to establish a clear understanding of operator needs and current tool limitations.

Key Insights

- User Needs: Real-time data visualization, minimal latency, secure access, and simplified remote control.

- Existing Solutions: While applications such as OctoPrint offer web-based monitoring, AR integration remains limited.

- Competitive Analysis: The analysis covered OctoPrint, MagicHand, and MIT Media Lab's AR for AM work. OctoPrint offers solid web-based monitoring but no AR. MagicHand adds IoT control via AR but lacks AM-specific integration. MIT's approach uses tablets rather than an immersive headset. None combined immersive interaction with real AM workflows.

Key Functionality Requirements

- Authentication: Restricted access to authorized users.

- Real-Time Monitoring: Displays parameters like temperature, time, and filament usage.

- Simulation: Visualizes the AM process in real time.

- Control Interface: Enables actions like start, pause, and stop remotely.
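As a rough illustration of how the authentication and control requirements might map onto the data-acquisition side, the sketch below builds the HTTP request that an AR button press could translate into. It assumes OctoPrint's REST job endpoint (`POST /api/job`, authenticated with an `X-Api-Key` header). The helper name `build_job_request`, the host, and the key are hypothetical, and mapping "stop" to OctoPrint's `cancel` command is an assumption rather than a description of the project's actual code.

```python
import json

# Assumed mapping from the AR buttons to OctoPrint job commands.
# OctoPrint has no "stop" job command; "cancel" is the closest equivalent.
AR_ACTION_TO_PAYLOAD = {
    "start": {"command": "start"},
    "pause": {"command": "pause", "action": "pause"},
    "stop": {"command": "cancel"},
}


def build_job_request(action, base_url, api_key):
    """Return (url, headers, body) for an AR control action (hypothetical helper)."""
    if action not in AR_ACTION_TO_PAYLOAD:
        raise ValueError(f"unsupported action: {action!r}")
    url = base_url.rstrip("/") + "/api/job"
    # The API key restricts control to authorized clients.
    headers = {"X-Api-Key": api_key, "Content-Type": "application/json"}
    body = json.dumps(AR_ACTION_TO_PAYLOAD[action])
    return url, headers, body


if __name__ == "__main__":
    # Placeholder host and key, for illustration only.
    url, headers, body = build_job_request("pause", "http://octopi.local", "PLACEHOLDER_KEY")
    print(url, body)
```

Keeping request construction separate from sending makes the mapping easy to check without a printer attached.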

03 — Development

How it was built

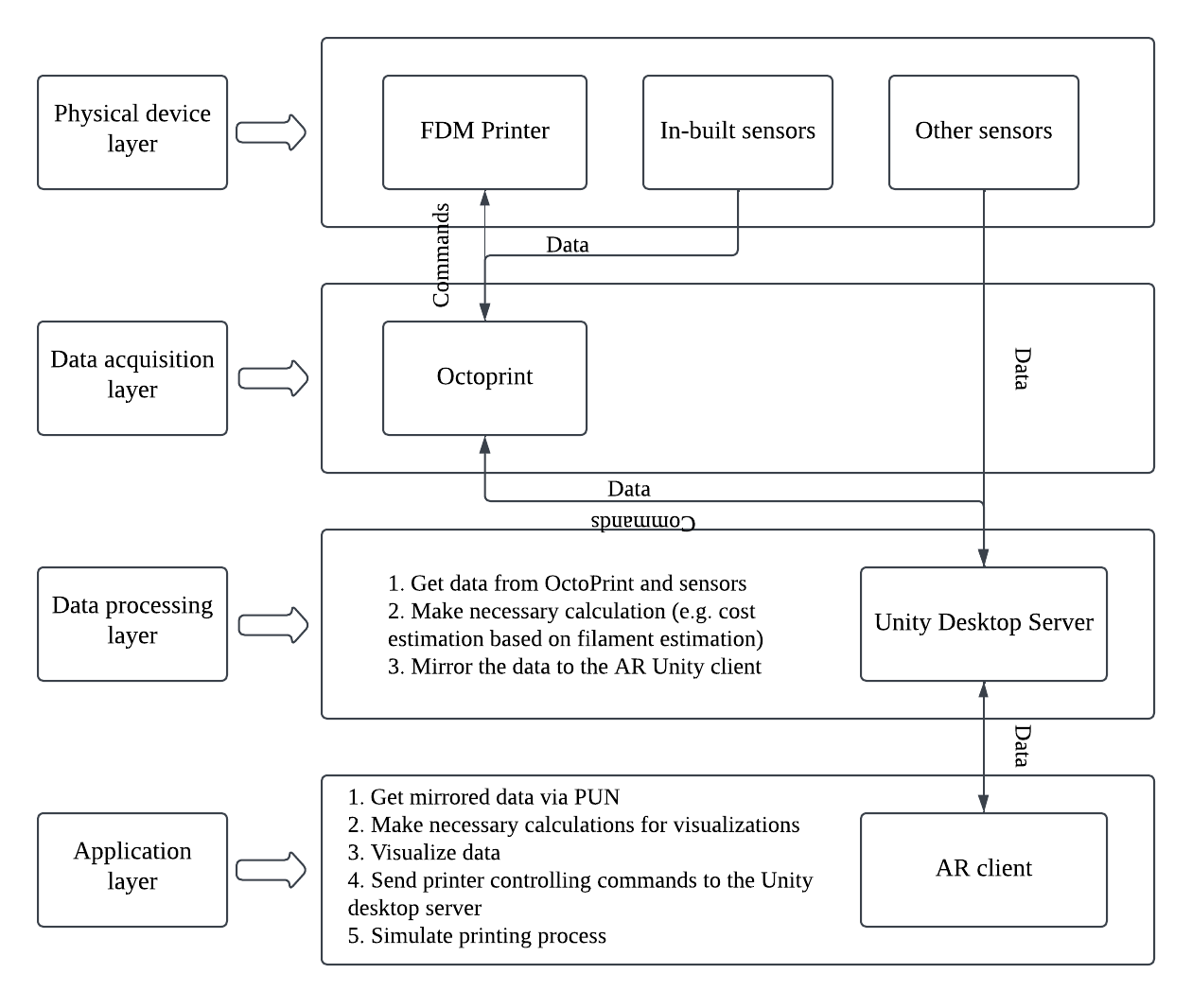

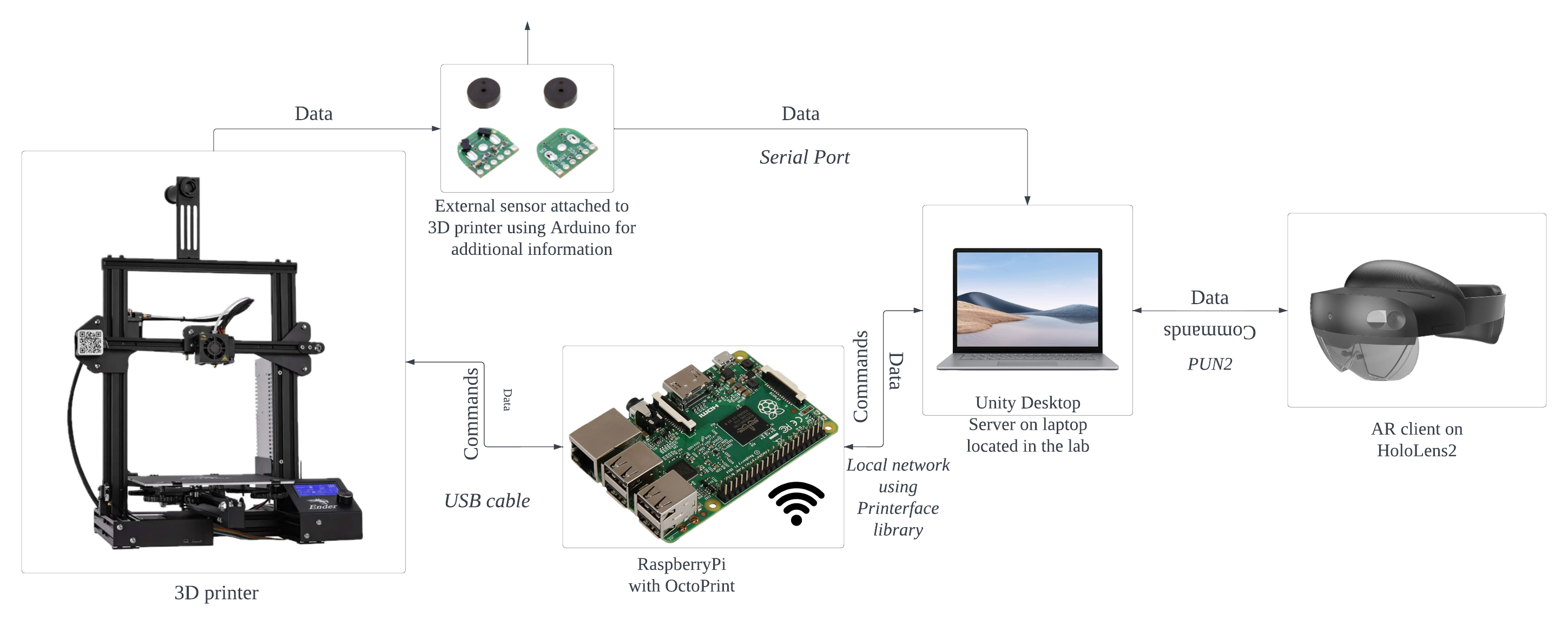

The system runs across four layers: physical (the FDM printer), data acquisition (OctoPrint + Raspberry Pi), data processing (Unity desktop server), and application (HoloLens 2 interface). Data flows from printer to headset in near real time, with Photon Unity Networking (PUN2) handling synchronization between the server and the headset.
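To make the hand-off between the data-acquisition and data-processing layers concrete, here is a minimal sketch of how raw OctoPrint state could be flattened into the values the headset displays. It is written in Python for brevity (the actual server was built in Unity) and assumes the JSON shapes returned by OctoPrint's `/api/printer` and `/api/job` endpoints. The `Telemetry` class and `to_telemetry` function are hypothetical names, not part of the project.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Telemetry:
    """Flat snapshot of the values shown on the AR panel (hypothetical type)."""
    bed_temp_c: Optional[float]
    nozzle_temp_c: Optional[float]
    progress_pct: Optional[float]
    print_time_s: Optional[float]
    filament_mm: Optional[float]


def to_telemetry(printer_state: dict, job_state: dict) -> Telemetry:
    """Extract display values from assumed /api/printer and /api/job responses."""
    temps = printer_state.get("temperature") or {}
    progress = job_state.get("progress") or {}
    # "filament" can be null when no job is loaded, so fall back to {}.
    filament = ((job_state.get("job") or {}).get("filament") or {}).get("tool0") or {}
    return Telemetry(
        bed_temp_c=(temps.get("bed") or {}).get("actual"),
        nozzle_temp_c=(temps.get("tool0") or {}).get("actual"),
        progress_pct=progress.get("completion"),
        print_time_s=progress.get("printTime"),
        filament_mm=filament.get("length"),
    )
```

Missing fields come through as `None`, so the display layer can show a placeholder instead of failing when the printer is idle.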

Four-layer system architecture diagram

System communication diagram

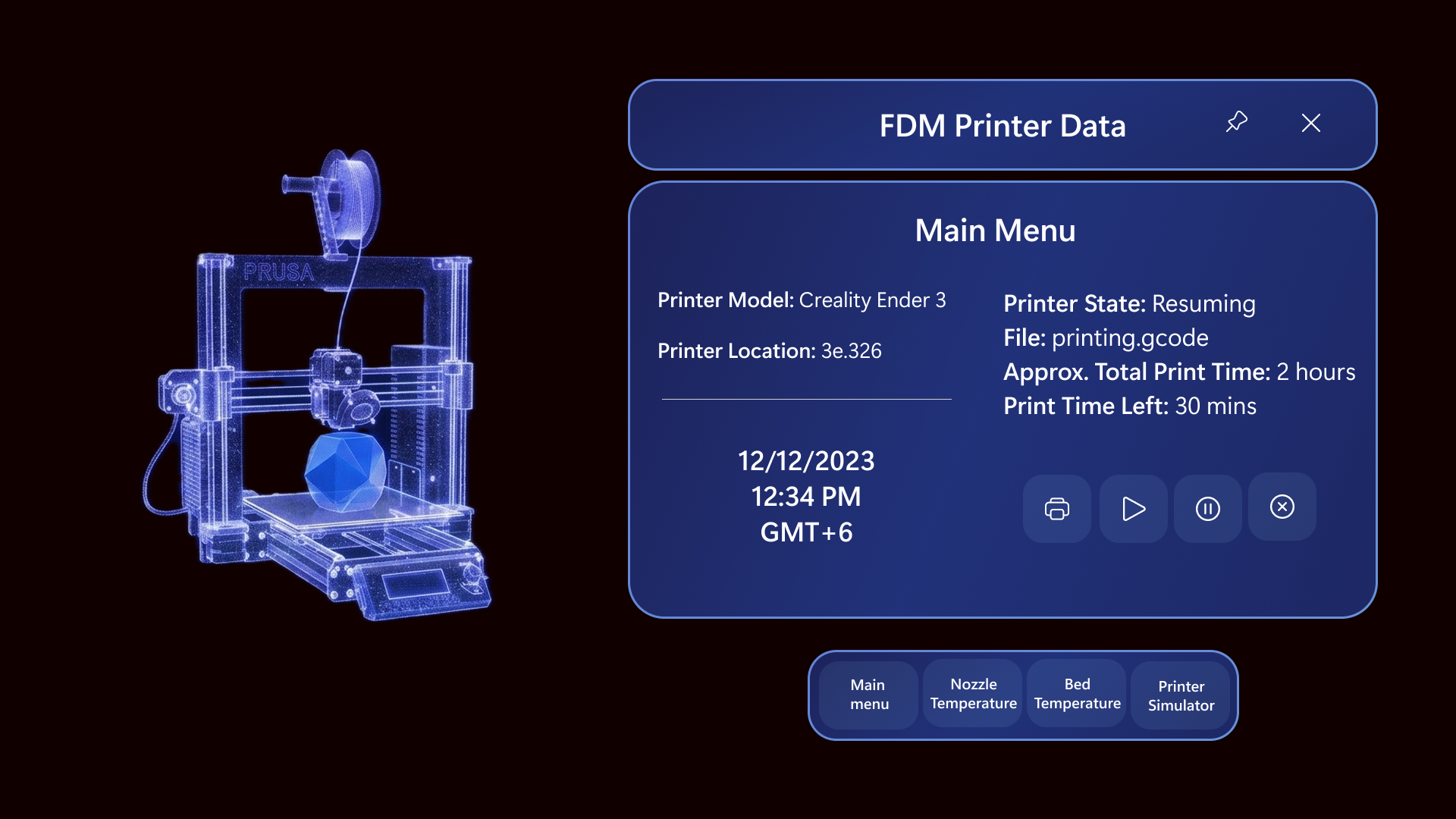

The UI was built using the Mixed Reality Toolkit (MRTK) and Microsoft's design language for AR, with a deliberate focus on simplicity and learnability. The interface features a digital twin visualization of the printer, interactive control buttons, and a live parameter display panel.

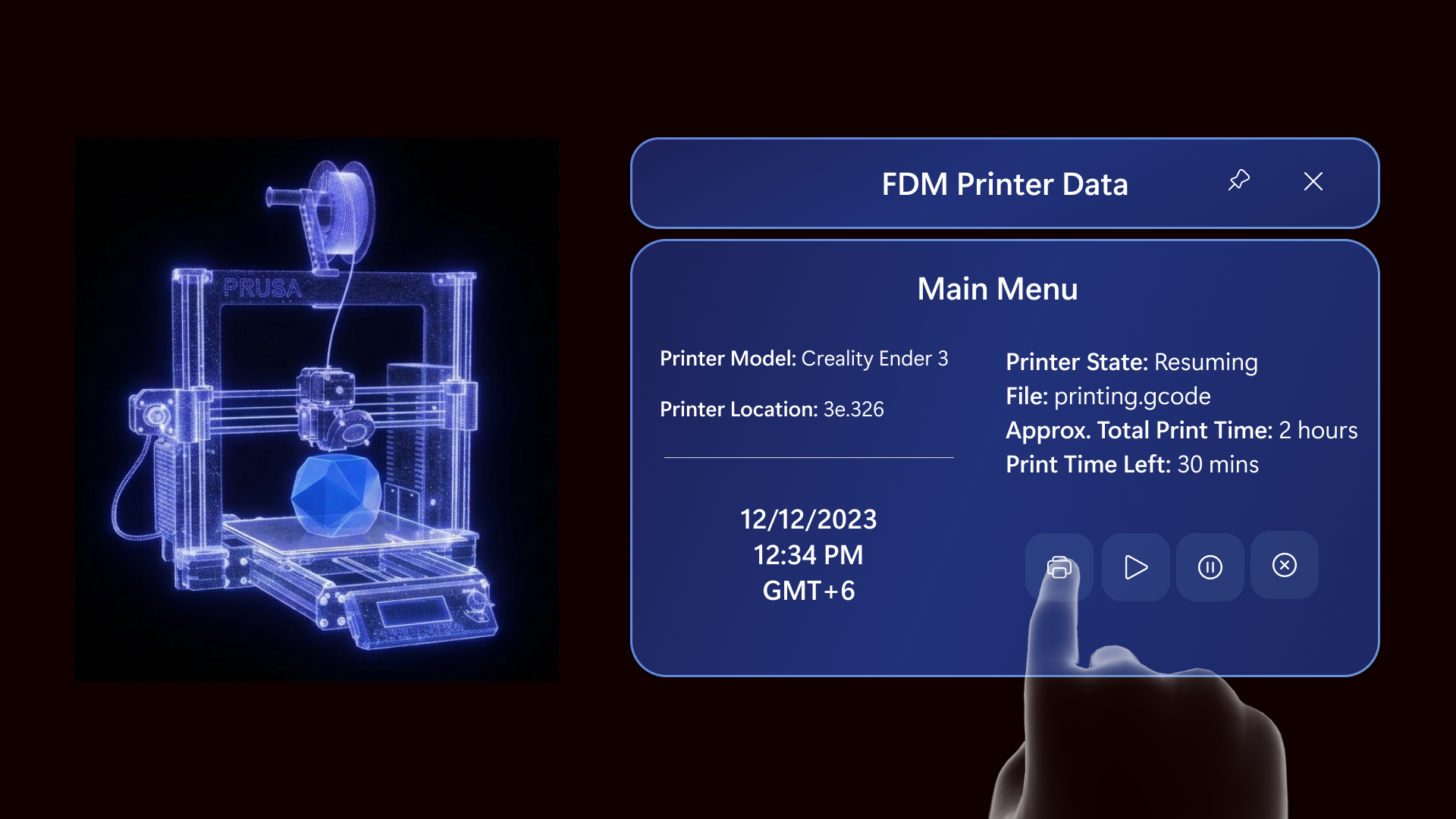

Main Menu UI

Designing for AR meant navigating real hardware constraints: HoloLens 2's field of view, gesture recognition reliability, and the latency introduced by the PUN2 networking layer.

04 — Outcome

What it achieved

This project produced a working proof of concept that demonstrates the viability of AR-assisted remote monitoring for additive manufacturing. Using a HoloLens 2 interface, the prototype surfaced key capabilities, such as real-time print simulation and remote machine interaction, that are typically inaccessible without physical presence in the lab or on the factory floor.

Transmission benchmarks showed strong performance at the OctoPrint layer (avg. 0.016 ms, σ ±0.006 ms), with Unity-to-HoloLens latency averaging 936 ms, a baseline that future iterations can improve on. The architecture's modular design suggests a viable path toward scalability across different AM devices, and points toward a longer-term vision of digital twin integration.
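For readers who want to reproduce this kind of summary, the sketch below shows one way an average and standard deviation could be derived from per-message latency samples. The function name `summarize_latency` and the sample values are illustrative placeholders, not the project's measurements or its actual benchmarking code.

```python
import statistics


def summarize_latency(samples_ms):
    """Return (mean, sample standard deviation) of latency samples in milliseconds."""
    if len(samples_ms) < 2:
        raise ValueError("need at least two samples to estimate spread")
    return statistics.mean(samples_ms), statistics.stdev(samples_ms)


if __name__ == "__main__":
    # Placeholder values for illustration only; these are not measured data.
    placeholder_samples_ms = [900.0, 950.0, 940.0]
    mean_ms, std_ms = summarize_latency(placeholder_samples_ms)
    print(f"avg. {mean_ms:.1f} ms, sigma {std_ms:.1f} ms")
```

Reporting spread alongside the mean matters here because a headset interface is sensitive to jitter as well as to average delay.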

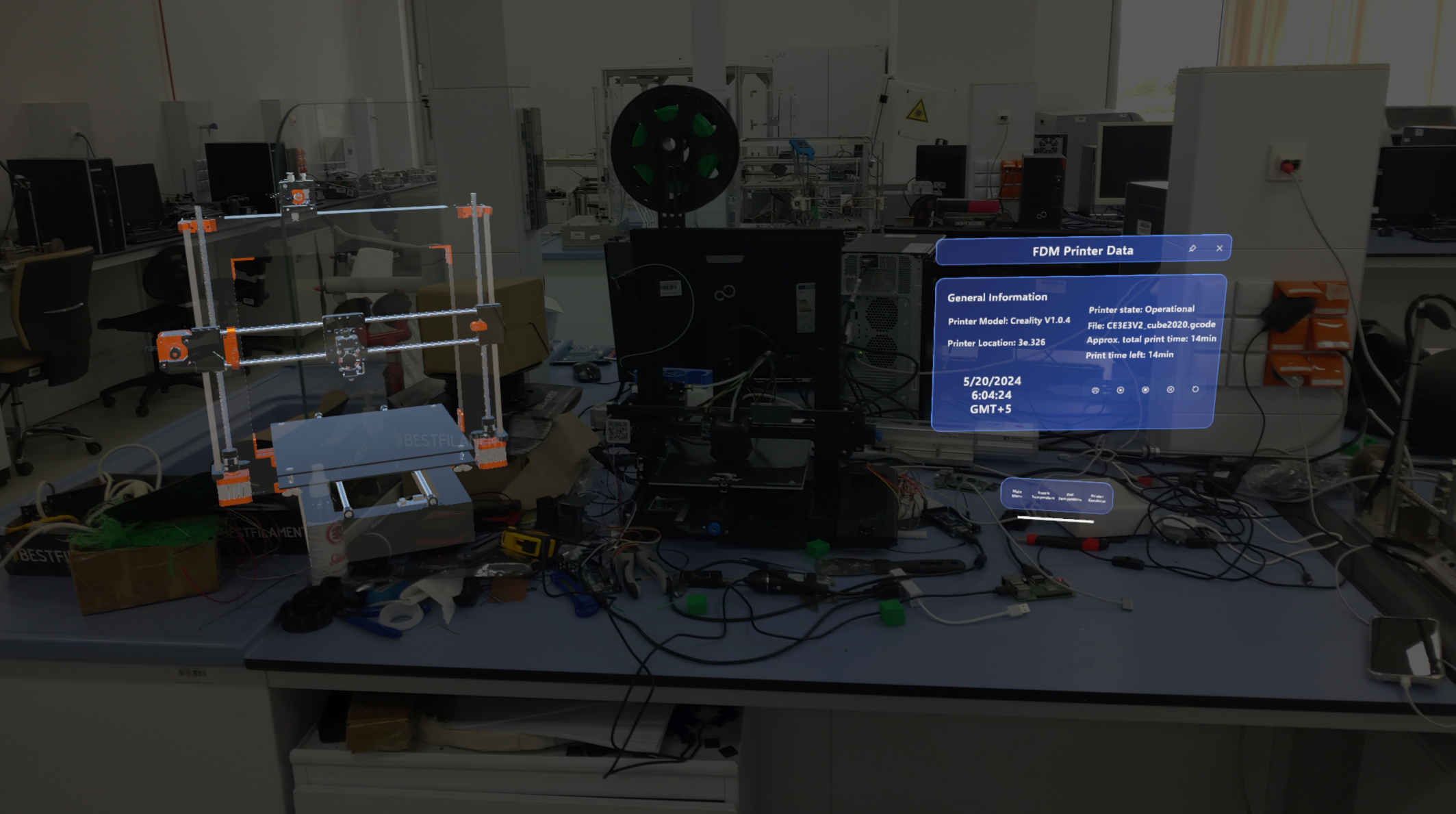

AR control interface — start, pause, stop

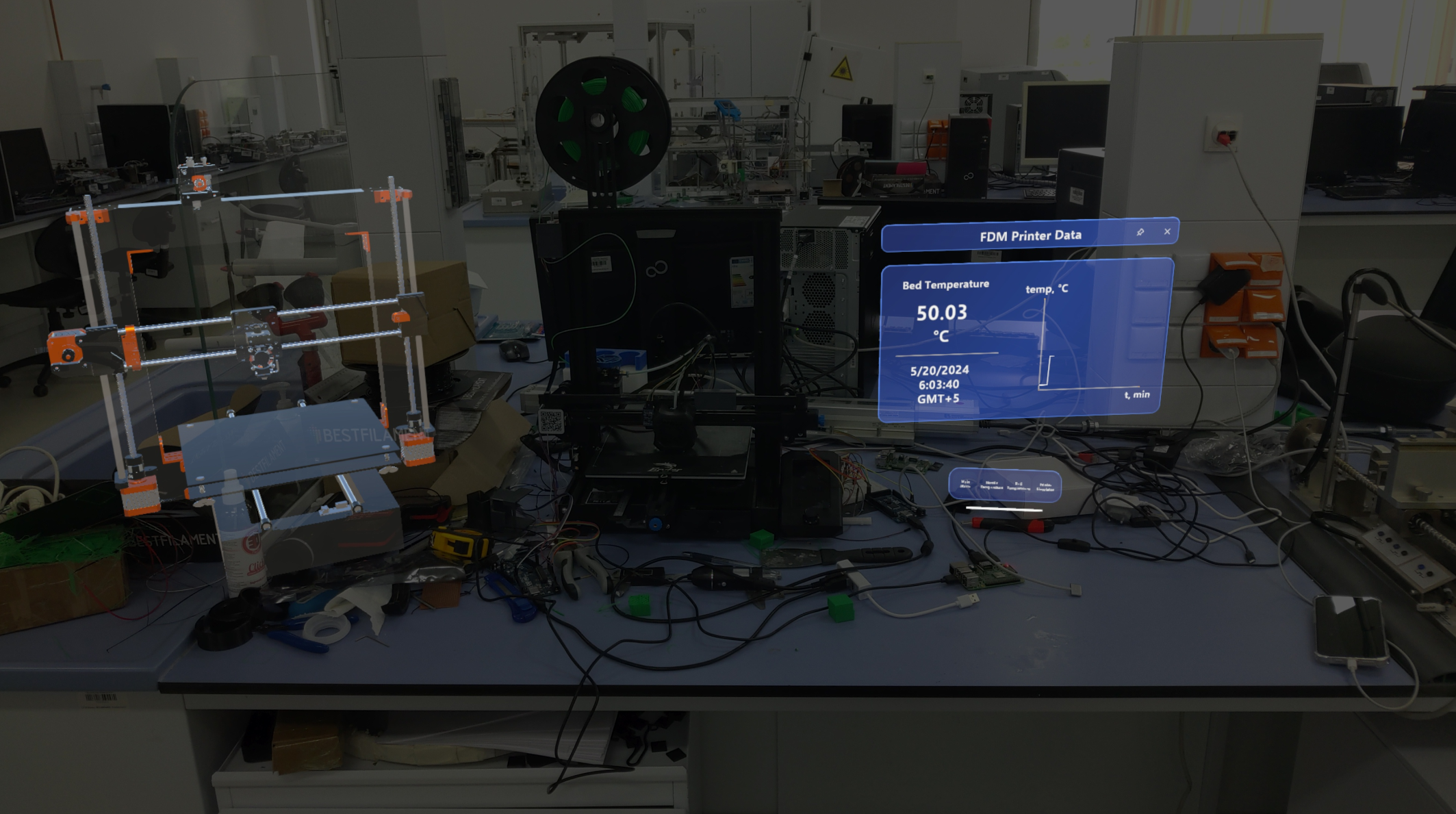

Real-time 3D printer bed temperature monitoring panel

05 — Reflection

What I learned

This project was my first experience with AR systems. Designing and developing across physical and virtual domains required balancing technical and design constraints simultaneously: field of view, latency, and data acquisition each shaped decisions in ways I hadn't anticipated.

Grounding the work in research was essential from the start. Stakeholder interviews surfaced the functional requirements, while technical exploration defined what was actually feasible. Drawing on literature around cyber-physical systems and HCI helped ensure the system stayed user-centered rather than purely engineering-driven.

If I revisited this project, I would spend more time identifying lower-latency data communication channels and push further into multi-user co-presence, giving multiple operators a shared AR view for genuinely collaborative remote monitoring.